With the release of ESXi 4.1, the bnx2i driver is included. The bnx2i driver is loaded after bnx2 (the driver for the Broadcom NetXtreme II NICs).

bnx2i is the driver that enables the hardware-dependent iSCSI initiator functionality of the Broadcom 5709 single/dual/quad port NICs (including internal NICs in servers such as the Dell R710).

The Broadcom 5709 NICs are both TOE and iSCSI offload NICs.

Brilliant, so we can remove the CPU overhead of the software iSCSI initiator and use these hardware-dependent iSCSI initiators. But there is one small caveat with this... Jumbo frames do not work and are not supported on Broadcom 5709 hardware-dependent initiators.

See http://www.delltechcenter.com/thread/4100544/vSphere+4.1+upgrade

Initial thoughts then lead to...

Software iSCSI Initiator with Jumbo Frames vs Hardware-Dependent iSCSI Initiators without Jumbo Frames

...which performs best?

To decide this I performed some tests...

The Test:

The server is a Dell R710, storage an EqualLogic PS6500X, all connected by Cisco 3560G switches.

First, the VMware software iSCSI initiator was configured with jumbo frames as per my previous post, "Multiple VMkernel NICs, Round Robin MPIO - DVS and Jumbo Frames".
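Before testing, it is worth checking that jumbo frames really pass end to end through the VMkernel ports, the switches and the array. A common check is a don't-fragment ping sized to fill the MTU exactly (on ESXi this is typically done with `vmkping`, for example with its size and don't-fragment options; check the usage for your build). The small sketch below only works out the payload size to use, assuming a 20-byte IPv4 header with no options and an 8-byte ICMP header. The function name is mine, purely for illustration.

```python
# Sketch: largest ICMP echo payload that fits in one unfragmented frame.
# Assumes IPv4 without options (20 bytes) and an ICMP echo header (8 bytes).
IPV4_HEADER_BYTES = 20
ICMP_HEADER_BYTES = 8


def max_ping_payload(mtu: int) -> int:
    """Return the ICMP payload size that exactly fills the given MTU."""
    return mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES


for mtu in (1500, 9000):
    print(f"MTU {mtu}: ping payload {max_ping_payload(mtu)} bytes")
```

If a ping of 8972 bytes with don't-fragment set fails while a 1472-byte one succeeds, something in the path is not passing 9000-byte frames.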

Several virtual machines were running on the iSCSI storage and the results were monitored.

I then changed it to use the Broadcom 5709 hardware-dependent iSCSI initiator without jumbo frames, continuing to monitor the results.

Finally I changed it back to software iSCSI initiator with jumbo frames.

The Results:

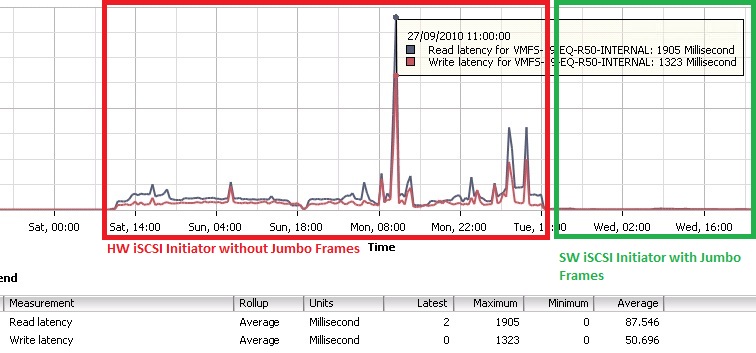

Here you can see that the virtual machine in this example, using the hardware initiator and 1.5k frames, was suffering high read and write disk latency.

There was a noticeable difference in the performance of the guest OS and application.

In addition, the metric results (seen below) show a 1905 millisecond read latency and a 1323 millisecond write latency.

However, when changed back to the software initiator and 9k jumbo frames, the disk latency drops to between zero and 2 milliseconds.

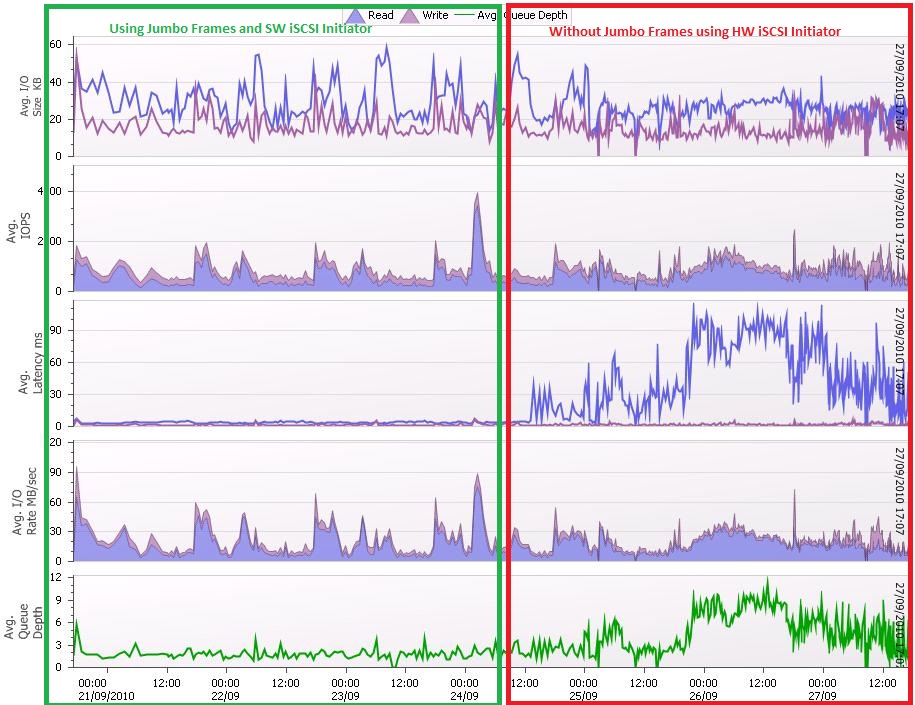

SAN HQ (which comes with Dell EqualLogic) provides additional confirmation and insight at the storage system level into the changes in performance.

In the below performance graphs the difference is clear as soon as the switch is made from software initiator with jumbo frames to hardware initiator without jumbo frames.

The most noticeable change is disk latency, which has increased tenfold.

Secondly, the queue depth has more than doubled.

It also had an effect on the IO size, which has reduced due to the smaller frame size.
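As a rough sanity check on those SAN HQ figures, Little's law (outstanding IOs = throughput x latency) ties queue depth and latency together. The sketch below is my own back-of-envelope calculation, not something SAN HQ reports. It assumes queue depth and latency are averaged over the same interval at the same point in the stack, and it uses rounded ratios of 2x and 10x taken from the observations above.

```python
# Sketch: Little's law, N = X * R, rearranged to X = N / R.
# N = average queue depth, X = throughput in IOPS, R = average latency.
# Working in ratios (after / before), so no absolute figures are needed.


def relative_throughput(queue_depth_ratio: float, latency_ratio: float) -> float:
    """Return the throughput ratio implied by the queue depth and latency ratios."""
    return queue_depth_ratio / latency_ratio


# Assumed, rounded ratios: queue depth roughly doubled, latency roughly tenfold.
ratio = relative_throughput(queue_depth_ratio=2.0, latency_ratio=10.0)
print(f"Implied IOPS after the change: about {ratio:.0%} of before")
```

Under those assumptions the array would be completing roughly a fifth of the IOs per second it did before, which fits with the sluggish guest OS.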

My findings are that the gain from performing iSCSI offload with the Broadcom 5709 hardware-dependent iSCSI initiator is massively outweighed by the gain from using jumbo frames.

Even though there is still the overhead of the software iSCSI initiator, it is jumbo frames that improve performance.
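To give a feel for what the frame size changes on the wire, here is a minimal sketch that counts how many Ethernet frames a single IO needs at each MTU. The 64 KB IO size is a hypothetical example, not a figure from my test. The sketch assumes 40 bytes of IPv4 and TCP headers with no options, 38 bytes of per-frame Ethernet overhead (preamble, header, FCS and inter-frame gap, no VLAN tag), and it ignores the iSCSI PDU header.

```python
import math

# Per-frame overheads (assumptions: no VLAN tag, no IP or TCP options).
ETHERNET_OVERHEAD_BYTES = 38  # 8 preamble/SFD + 14 header + 4 FCS + 12 inter-frame gap
IP_TCP_HEADER_BYTES = 40      # 20 IPv4 + 20 TCP


def tcp_payload(mtu: int) -> int:
    """Bytes of TCP payload carried by one full-size frame."""
    return mtu - IP_TCP_HEADER_BYTES


def wire_efficiency(mtu: int) -> float:
    """Fraction of bytes on the wire that are TCP payload."""
    return tcp_payload(mtu) / (mtu + ETHERNET_OVERHEAD_BYTES)


def frames_for_io(io_bytes: int, mtu: int) -> int:
    """Number of frames needed to carry one IO of the given size."""
    return math.ceil(io_bytes / tcp_payload(mtu))


io_size = 64 * 1024  # hypothetical 64 KB IO
for mtu in (1500, 9000):
    print(f"MTU {mtu}: {frames_for_io(io_size, mtu)} frames per IO, "
          f"{wire_efficiency(mtu):.1%} payload efficiency")
```

The raw payload efficiency only moves by a few percentage points, but the number of frames to build, send and acknowledge for each IO falls from 45 to 8 in this example.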

While the hardware initiator removes the overhead that the software initiator presents, the gain is negligible given that jumbo frames cannot be used with this Broadcom hardware-dependent initiator.

So my advice is: do not use the Broadcom hardware-dependent iSCSI initiator.

Instead use the VMware iSCSI software initiator along with jumbo frames.
